As you know, Part I - Procedure is now published. Within it, I laid out in detail the test, how it was constructed, and how data was collected. Today, we'll embark on the exploration of the data itself. While I will try to conclude with some general points by the end of this post, I will not have had time to analyze everything quite yet. I'm currently anticipating at least another couple of posts to fully flesh out the data set including posting some of the subjective comments made by listeners. I feel this is the only way to properly thank those who took their time and provide as much information as possible to answer any lingering questions.

Let's start... Today, let's focus on the "core" or "headline" results I think most of us are interested in. Who are the people who tested and submitted results? What overall were their preferences? What was the result for each specific track? How confident were the respondents about their choice? And how significant were these findings ultimately?

I. Who were the respondents?

Audiophiles of course :-). As I mentioned last time, the "advertisements" for this test were placed in a broad range of audio forums on the Internet. Some of these cater to more "subjectivist" listeners (such as AudioAsylum, maybe ComputerAudiophile), others are more "objectivist" (Hydrogen Audio, Squeezebox "Audiophile" subforum), and others I would call more balanced (Steve Hoffman). As you can see, this is a worldwide test (in general, ignore the "standard deviation" stat on some of these graphs; it is meaningless and was just automatically generated by the survey site):

The clear "winners" were Europeans, who contributed a full 53% of the results! They are followed by N. Americans with 30%, then Aussies 8%, Asians 6%, and S. Americans 2%. Too bad there was no representation from Africa, and I'm not surprised at the lack of Antarcticans :-).

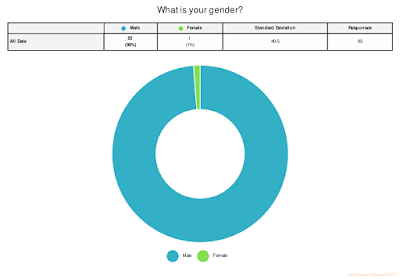

So how about gender of the respondents:

Hmmm, looks like there aren't many women out there in the audiophile world interested in blind tests and/or MQA :-). Only one of the respondents was female - thank you for doing this!

How about age groups?

This I think gives us a good look at the age distribution of computer audiophiles - at least those most likely interested in hi-res digital audio and playback. As you can see, the peak of those who were able to give this test a try with the pre-requisite gear were clearly within the 51-70 age groups constituting 54% of all respondents.

Overall, I see that 60% of respondents used speakers and 46% used headphones, which means 6% used both to evaluate the test tracks.

As expected these days, USB DACs dominate (58%) when it comes to analogue conversion, with network streaming next (35%), followed by surprisingly few S/PDIF users (12%). Again this is quite reasonable as I think most audiophiles appreciate that asynchronous USB offers better jitter suppression (whether audible is another matter of course).

Looking at OS's, Windows gets 35% which is about three times the Mac OS (12%). Interesting that 16% used some Linux variant including Android-based machines (not surprising since audio streamer machines often run Linux software underneath; such as the Raspberry Pi, Sonore xRendu, etc...).

So how about the cost of the audio systems used to evaluate?

Remember, I'm asking people to exclude the "peripherals" like power conditioners, cables, and the like. It looks like the "sweet spot" for a good 28% of listeners is in the US$2000-$5000 range with a second bump in the US$10,000-$20,000 range. If I look at the system descriptions offered by the testers, I see some really good stuff (no particular order)...

Source: iMac, Raspberry Pi 3, Intel NUCs, Linn Majik DSM/2, Intel i7 PC, various Windows laptops, various Linux desktops & laptops, MacBook (Pro), Naim UnitiQute, Sonore microRendu, Bluesound Node 2, Aria music server, ODROID XU4, Auralic Aries Femto

DAC: Cambridge Audio DACMagic Plus, GD-Audio DAC, Jadis JS2 Mk IV, TEAC UD-501, Oppo BDP-105(D), DIY AKM AK4497 DAC, Rega DAC-R, Accuphase DP720, Oppo HA-1, Fiio X3 II, Burson Conductor V1 & V2, Auralic Vega, Mytek Brooklyn, TEAC UD-301, Schiit Yggdrasil, ASUS Xonar Essence STX, Meridian Explorer, iFi iDSD Nano, Sony NWA-30, Audiolab Q, Chord Mojo, Schiit Modi 2, Emotiva DC-1, Cambridge Audio Azur 851D, Denafrips Aries, T+A DAC 8 DSD, Gryphon DAC One, Resonessence Audio Herus, HiFiBerry DAC+ Pro, Chord QuteHD, dCS Vivaldi "stack"

Amplifiers: Linn Majik 6100, Musical Concepts Hafler mod, mbl 9007 monoblocks, Spectral DMA-150, Bryston 4B SST2, Electrocompaniet, ATC SIA2-150, various Emotiva models, Rogue Audio integrated, Parasound Halo A21, Yamaha A-S500, Benchmark AHB2, BOW Technologies Walrus, Olive Naim 72/Hicap/250

Speakers: Paradigm Studio 100v3, GoldenEar Triton One, Acapella High Violon mk VI, Linn Kaber, KEF LS50, JPW Sonata, Infinity Renaissance 90, Quad ESL 2805, Naim Allae, Thiel CS2.4SE, PMC IB2i, Genelec 8030B, Focal Alpha 80, Magnepan 3.6/R, Celestion A2, Monitor Audio Bronze 2, ATC SCM19 v.2, Definitive Technology StudioMonitor 45, Harbeth speakers, Polk TSi 400, Thiel CS3.7, Sonus Faber Guarneri Evolution

Headphones: Sennheiser HD650, Sennheiser HD600, Beyerdynamic DT-250, AKG K240, AKG K601, Audeze LCD-XC, Sennheiser HD800, Sennheiser Momentum, AKG HSC271, Grado SR 80, HiFiMan HE-500, Beyerdynamic DT 770, Audioquest Nighthawk

Whew, quite the list and that's not totally complete (I think everyone gets the idea of the breadth and depth of the gear used)... Many testers listed far more detail! Some even posted their own objective equipment test results confirming low noise, high resolution capability :-). I didn't even list some other equipment like high-end NAS storage, pre-amps and headphone amps used here and details around all kinds of power conditioning, cabling, etc. Impressive systems, folks.

From the software side, numerous programs were used from foobar to JRiver to Roon to Media Monkey to Audacity to Audirvana for computers and with streamers everything from Volumio, piCorePlayer, RuneAudio to customized streaming solutions.

We also had a few testers with other musical / production experience as well as those who write audio hardware and music reviews:

17 musicians (20% of all respondents), and 13 (16% of all respondents) working behind the scenes in recordings, mixing and production.

II. What sample - MQA Core decode or standard Hi-Res PCM - did people prefer and with what confidence?

We now get to the moment of truth!

TRACK 1: ARNESEN - MAGNIFICAT

As you can see, the majority preferred Sample B which is the Hi-Res Audio version of the song with a 58% preference for the PCM vs. 42% going with the MQA Core decode. Statistically, binomial one-tailed probability of achieving 48 of the same result out of 83 tests with an assumed 0.5 probability of success results in p=0.094 (z=1.32). What threshold one puts for "significance" can be debated, but we can at least say there seemed to be a bias towards the PCM sample.
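A minimal Python sketch for checking this arithmetic. It assumes the quoted figures come from a normal approximation to the binomial with a continuity correction; that is a guess at the method, chosen because it reproduces the numbers in the text. The function name `one_tailed_binomial_z` is illustrative only.

```python
from math import sqrt
from statistics import NormalDist


def one_tailed_binomial_z(successes: int, trials: int, p0: float = 0.5):
    """Upper-tail z and p for a binomial count, via the normal approximation.

    Assumption: a continuity correction of 0.5 is applied, which is what
    matches the z and p values quoted in the post.
    """
    mean = trials * p0
    sd = sqrt(trials * p0 * (1 - p0))
    z = (successes - 0.5 - mean) / sd
    p_value = 1 - NormalDist().cdf(z)
    return z, p_value


# Track 1: 48 of 83 listeners preferred the Hi-Res PCM sample.
z, p = one_tailed_binomial_z(48, 83)
print(f"z = {z:.2f}, p = {p:.3f}")  # z = 1.32, p = 0.094
```

Dropping the continuity correction would give a somewhat larger z, so the choice matters when a result sits this close to conventional significance thresholds.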

There are more nuances we need to consider... Like whether this preference still holds true if we consider the level of "confidence" reported by the listeners. Here are the confidence levels rated from essentially no audible difference to "easy to tell the tracks apart":

One way we can calculate a weighted composite result is if we score by awarding 1 point for "No real difference", 2 points for "Slight difference", 3 points for "Moderate difference", and 4 points for "Clear difference". Doing this, we get a weighted composite score of 72 (40%) for MQA and 110 (60%) for standard Hi-Res PCM audio. Percentage-wise, that's a slight gain for Hi-Res audio which means that with confidence level factored in, most listeners still chose the standard PCM as preference.
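The weighting scheme can be sketched in a few lines of Python. The per-level counts below are hypothetical placeholders (the real breakdown was shown in a chart, not in the text); only the 1-to-4 point weights and the totals of 72 and 110 come from the post.

```python
# Points per confidence level, as described in the text.
WEIGHTS = {
    "No real difference": 1,
    "Slight difference": 2,
    "Moderate difference": 3,
    "Clear difference": 4,
}


def weighted_score(counts: dict) -> int:
    """Sum of (points x number of listeners) over the confidence levels."""
    return sum(WEIGHTS[level] * n for level, n in counts.items())


def share(a: float, b: float) -> float:
    """Percentage of the combined score that belongs to `a`."""
    return 100 * a / (a + b)


# HYPOTHETICAL counts for one sample, purely to show the mechanics.
hypothetical_counts = {
    "No real difference": 2,
    "Slight difference": 3,
    "Moderate difference": 1,
    "Clear difference": 1,
}
print(weighted_score(hypothetical_counts))  # 2*1 + 3*2 + 1*3 + 1*4 = 15

# Reported Track 1 composites: MQA 72, Hi-Res PCM 110.
print(f"MQA {share(72, 110):.1f}%, PCM {share(110, 72):.1f}%")  # MQA 39.6%, PCM 60.4%
```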

TRACK 2: GJEILO - NORTH COUNTRY II

Okay, moving on to track number 2.

Wow. You really can't get any more 50:50 than this! The difference is basically the fact that we have an odd number of total responses.

Check out the confidence ratings:

As you can see, the respondents thought the difference in sound between the MQA and standard PCM with this track was even more subtle than with Track 1 above; with a full 73% describing the sound as either no different or subtle!

And what happens when we apply the weighting as above? We get a weighted composite score for MQA of 76 (47.2%) and for the Hi-Res PCM of 85 (52.8%). What this means is that even though the same number of people picked each of the samples, those who picked B (Hi-Res PCM) preferred that to a stronger degree.

TRACK 3: MOZART - VIOLIN CONCERTO IN D MAJOR

Finally track number 3...

Ahh, we see that in this one, there is a preference for MQA Core Decode of 55% versus 45% for the standard Hi-Res PCM. With binomial stats again assuming 0.5 chance of picking the MQA with each trial, p=0.189941 (z=0.878); interesting but not particularly significant.
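As a cross-check that does not rely on any approximation, the exact binomial tail can be computed directly. This sketch assumes 46 of 83 listeners chose MQA, a count inferred from the 55% figure and the quoted z value rather than stated in the text; `exact_upper_tail` is an illustrative name.

```python
from math import comb


def exact_upper_tail(successes: int, trials: int) -> float:
    """P(X >= successes) for X ~ Binomial(trials, 0.5), computed exactly."""
    favourable = sum(comb(trials, k) for k in range(successes, trials + 1))
    return favourable / 2 ** trials


# Assumption: 46 of 83 picked the MQA Core decode (about 55%).
p_exact = exact_upper_tail(46, 83)
print(f"exact one-tailed p = {p_exact:.3f}")
```

The exact value should land close to the approximate p quoted above, which supports the reading that the result is interesting but not significant.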

As above, let's run it through the weighting based on confidence levels as shown here:

This pattern is similar to the confidence rating we see with track 2, the Gjeilo North Country II, where the majority of people - 67% with this sample - thought that, at best, they were hearing subtle differences and the tracks were "hard to tell apart". With these calculations, we see that the weighted composite score becomes MQA 106 (62%), and PCM 64 (38%). It looks like more people who felt confident about their choice decided to go with the MQA. This is almost a mirror image of what we saw with the first track (the Arnesen), where the weighted split was 40% MQA to 60% PCM.

III. What is the final composite score and which of the two techniques did people prefer?

With 3 tracks per person and 83 respondents, we can then pool the whole data set together and determine out of a total of 249 individual comparisons what the final tally looks like:

And combined with weighted totals using confidence ratings (remember - 1 point for "No real difference", 2 points for "Slight difference", 3 points for "Moderate difference", and 4 points for "Clear difference"):

Hmmmm... Statistically we're just flipping a coin here!
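To see how close to a coin flip the weighted result is, the per-track composites quoted in section II can be added up. This sketch assumes the pooled weighted tally is simply the sum of those three per-track scores; the dictionary layout is illustrative.

```python
# Weighted composite scores quoted per track in the text.
per_track = {
    "Arnesen - Magnificat": {"MQA": 72, "PCM": 110},
    "Gjeilo - North Country II": {"MQA": 76, "PCM": 85},
    "Mozart - Violin Concerto": {"MQA": 106, "PCM": 64},
}

# Assumption: the pooled score is the plain sum across tracks.
total_mqa = sum(scores["MQA"] for scores in per_track.values())
total_pcm = sum(scores["PCM"] for scores in per_track.values())
grand_total = total_mqa + total_pcm

print(f"MQA {total_mqa} ({100 * total_mqa / grand_total:.1f}%)")  # MQA 254 (49.5%)
print(f"PCM {total_pcm} ({100 * total_pcm / grand_total:.1f}%)")  # PCM 259 (50.5%)
```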

IV. Impression up to this point...

Well dear readers, in the big picture, it's pretty clear what we're looking at here. There's just no consistent evidence of a significant difference. And even when I include the confidence score in the calculations, there's just no overall conviction in the results to suggest that there's a real preference for the MQA Core decoded version as being somehow superior to the standard Hi-Res PCM when subjected to the same upsampling filter operation (representative of how MQA typically processes the playback).

So, let's enumerate the findings into a few general impressions so far:

1. In 2/3 tracks, there was a slight preference towards the PCM track when taking into account the weighting system (Track 2 actually was essentially exactly 50:50 but the standard PCM won out in the confidence weighted adjustments). This finding was certainly not strong nor were the respondents confident about having heard a difference. Remember that this is a "forced choice" situation and would at least potentially capture any "subconscious" decision making.

2. With the data from all the tracks put together, whether unweighted or weighted with confidence data, it's pretty much a 50:50 "guess". The small overall preference towards Hi-Res PCM and the greater preference for MQA Decode in track 3 could very well be a combination of fatigue and response bias towards whatever was playing in selection "B" (positional / order bias). In this test, the standard PCM samples were labeled B 2/3 of the time and MQA was in the "B" position for the 3rd sample. I can imagine people having difficulty hearing a difference and tending to just pick "B" more.

3. From a confidence perspective, on the whole, the majority thought that whatever audible difference there was, it was "subtle" at best. Specifically, the final confidence tally looked like this:

If we look only at people who describe hearing "moderate" or "clear" differences, here is how they selected (no weightings applied):

An exact 50:50 coin toss even within the group of listeners who thought they heard significant differences to a moderate or obvious degree. Again, there is no preference towards MQA Core or just standard hi-res PCM playback.

There's more to say in subsequent posts. For the time being, these results likely would be disappointing for those who were expecting the MQA CODEC to sound significantly better (or even just "different"). It puts into question whether the encoding & decoding of the data in MQA really has added in any way to better sound through ostensible mechanisms like "deblurring". It looks like there is no evidence for this using these demo 2L files when we subject both the decoded MQA and Hi-Res PCM to the same upsampling filter. At least we can say the MQA version achieves the same sound as a 24-bit Hi-Res PCM version in a data-compressed package. Depending on which of the 3 tracks, I'm seeing that the MQA file is 60-70% the size of the original 24/88 or 24/96 downsample (remember that MQA Core only unfolds to these 2X samplerates, the rest is upsampling to the "original" samplerate). Remember, the "origami" system reduces the ability for lossless compression algorithms like FLAC to compress even further in the lowest 8 bits so it's not reasonable to expect fully half the file size. 60-70% of original size is very good compression for streaming systems but I think for one's own library, this might not be particularly meaningful given low storage costs.

Considering the length of this post, let me stop here... Although I think the results here are already quite useful in understanding what will likely be the final outcome, there's actually more we can look at. In the next week, what I'll do are some analyses drilling into the subgroups in the data - were musicians and audio engineers more likely to prefer MQA? What about those with more expensive audio systems? Were there more "golden ears" selecting all 3 MQA Decode samples or all 3 Hi-Res PCM samples? Stay tuned.

Have a great week everyone! Enjoy the music...

NEXT: MQA Core vs. Hi-Res Part III: Subgroup Analysis

Excellent analysis and really interesting results, although I don't imagine it's much of a surprise. I'm really interested in the confidence of differentiating samples with regard to age, hopefully that'll be in the next installment.

Many thanks for conducting this experiment, I'm sure the forums will be lit up this week!

Cheers

Neal

Nice reading, waiting for Part III, thanks.

Thanks guys... Yeah, no big surprise I think but it's good to gather the data and explore whether the impressions pan out...

Outstanding!

sk16

Hi Archimago

Thanks for your great work. I must say, I had expected a different outcome. I wasn't expecting the nearly 50:50 result.

Juergen

Thanks for the note Juergen.

Of course as an Internet conducted test, standardization and lack of controls could be an issue. It's at least a starting point and maybe in time some folks can run a more rigorous test...

Hi Archimago,

Great piece of work. I'm really looking forward to the "industry"'s reaction to this.

I am a friend of minimalist audio reproduction. The fewer format conversions, signal processings, components in the (complete, incl. recording) signal path, the better.

So I am not surprised at the result.

The advertised use case for MQA is getting the maximum sound quality out of a capacity constrained transmission pipe. The comparison files in the zip for the test have roughly the same size, which they should have, being the same length, bitrate and bit depth.

The question I have is whether the MQA test file / PCM (FLAC encoded) prior to unfolding / upsampling had the same size? This would be the case after the two files pass through a capacity constrained communication link.

If yes, then the test is a good test case for the advertised claim.

Could you please briefly elaborate, Archimago.

Thanks a lot

Rudi

Hi Rudi,

Yes, as I noted in the "IV. Impression" section, indeed there is data compression with MQA. To achieve essentially the same sound quality, MQA files before decoding/unfolding are about 60-70% the size of a similar downsampled DXD --> 88kHz or 96kHz file. Personally as discussed in a previous post, I see no real value in 192+kHz audio.

This is good for streaming with bandwidth reduction. But I'm not sure whether I would ever want to buy MQA files since it is "lossy" in comparison considering how inexpensive hard drive storage space is. I'd much more likely grab a true "flat" 24/96 and not worry whether the compression technique affects any audible nuances. I personally do not believe the digital filtering used by MQA at 88kHz/96kHz is audible.

Considering that I found no great difference in audibility here in terms of "deblurring" and whatnot, other than that specific low bandwidth, data compression purpose, I don't see any other benefit to MQA.

Hi Archimago

Thanks for your great work. I'm quite surprised by the result because of the lack of consistency in the answers. I found the clearly best sound (clarity, perspective etc) in B, A and B (the non-MQA-versions) especially on track 2 and my wife who has younger better ears found the same in repeated blinded tests. We answered together in my name. I've repeated blind listening tests on another high quality system with the same result. We thought that everyone else with good equipment would hear the same. If responders hear a true difference between the 2 formats it should be consistent and they should have the same format preference in all 3 examples. It could be interesting to see how many gave consistent answers and which format they preferred.

Thanks for your initiative and your great homepage.

Peter

Thanks Peter,

Much appreciate your diligence :-).

To me, it's simply a reflection of the "razor's edge" when it comes to the subtleties and then each of us having different subjective preferences. If you answered BAB in that order, then you liked the PCM Arnesen, MQA Gjeilo, and MQA Mozart. That's cool...

Now that the MQA files are identified, I have some interesting personal interpretations, including 3 blind tests taken a few days ago (before the files were identified) with my wife, daughter, and son-in-law.

I was an official survey participant, voting exactly with the unweighted majority, slightly preferring the MQA files for all but the choral (first) selection. What is interesting is that I have the 2L SACDs of all but the choral test selection, and commented in my official survey that my choices (MQA) sounded more like the original SACDs, with which I was familiar (but had not played in a while). I do not usually listen to choral music, and chose the hi-res file for that case.

My son-in-law also picked 2 (as it turned out) MQA files as best, the choral piece (and he listens to choral music and opera), and the violin concerto piece (and his mother is an amateur violinist, concertmaster of a local community orchestra).

My wife picked completely opposite selections from me, and my daughter picked completely opposite selections from her husband. These small sample female results were both 2 for the hi-res files, as opposed to 2 for the MQA files for the males, my son-in-law and me. One more point was that only one of us had a strong preference and it was for only one of the test files. My daughter preferred the hi-res choral piece most definitely.

These are, of course, small sample sizes, showing that one can weave interesting stories from such few samples.

Thanks MitchEE,

Certainly your description and experience here again shows just how subjective these things are. Who knows, maybe there is something to the type of music and whether MQA could convey benefits.

Your comment about SACD is interesting. Subjectively I love SACD jazz as it can convey that smooth late-nite-smoky-jazz-club sense to some recordings even if the ultimate resolution might not be up to 24/96+. :-)

Again, thanks for the test. The results are unsurprising in their conclusion, but still highly welcome.

Hi SUBIT,

Surprise or not, I think it's always good to "study" what is found rather than isolated subjective impressions of one or a handful of people!

Fantastic write-up. So much work. And - IMO - all worth it in order to show - again - that MQA is worthless.

I am not surprised by the results only because I couldn't definitely decide on the superiority of any of the samples. I am just a typical adult human, not a golden-ear.

As we approach and exceed Red Book CD bit rates/depths we are seeing diminishing human-audible quality gains...even though they may be measurable by sensitive instruments.

I am not disheartened by the results of the test. I own an MQA DAC. I will continue to acquire and listen to full-res and high-res just for the hobby of it. As has been said elsewhere, the quality of recording and engineering of the original source is MUCH more important than greater than Red Book encoding schemes.

[Regarding your work here and in other articles, Archimago, you are a voice of objectivity in this audio sea of subjectivity. Thank you]

Thanks for the note Marlene and James,

Yeah, it's a bit of work but there is joy in at least trying to answer questions rather than just produce more verbiage, hot air, and mere opinions in the audiophile space.

I'm sure there are other situations but I know of few hobbies where so much of it is such pure fluff, advertising and click bait as we see in the world of audio...

Agree James that back in the day, the guys who created CD did their homework. They found a very good point of diminishing returns beyond which benefits are minimal for human perceptual limitations. Technological capabilities obviously overtook biology a long time ago (in human hearing)!

I believe that over the years, more objective folks have actually been quite FAIR towards MQA. I would have been completely willing to accept a blind test result showing MQA's superiority. It would actually give me pleasure to report if the result turned out to be 70-to-30 in favour of MQA for example and show wonderful z and p values...

Alas, that was not the case.

Nice archimago, keep it up!

I have a request, recently Opus 1.2 came out and I would love if you could do a similar blind test with Opus at say 128 kbps. (And maybe throw AAC and Vorbis in as well for good measure). The last time this was done was back in 2014!

http://listening-test.coresv.net/results.htm

Opus 1.2 introduced improvements in SQ, but at lower bitrates (32-48kbps). I'm using 140kbps Opus for daily listening and did many AB comparisons even with high-end headphones (LCD-2, HD-800, HD-650, DT-250) and found no verifiable difference to lossless files.

The only ever-so-subtle difference was with Choirs which may have lost a tiny bit of their airiness, but most certainly nothing to ditch Opus for.

MP3, of course fared much worse.

Thanks for the idea Samuel and appreciate the input SUBIT.

Admittedly, I think most audiophiles have put lossy CODECs on the backburner in the face of low cost storage... Opus would be a great streaming format if one were to choose lossy in 2017 for music!

Will keep it in mind :-).

Hi Archimago,

These results would seem to place MQA in a similar category to LossyWAV/FLAC. It might be instructive to compare the two, as I suspect that LossyWAV/FLAC probably out-performs MQA in both compression and audible distortion (though the slight MQA distortion is perhaps euphonic to some).

Cheers,

Bob

I prefer to dither source files manually and determine the effective bit-depth by studying the noise floor.

Still, thanks for bringing this up, never heard of LossyWAV before. What it seems to do is some sort of dynamic bit-truncation based on effective DR of the source material.

Very interesting.

The awesome thing of lossywav is how good it is for transcoding, since the only artifact is noise.

Oh yeah, LossyWAV!

You're probably right. For any particular track, we likely can find a very good setting for LossyWAV that will compress better and be just as transparent as whatever "original" resolution it came from.

Of course, we don't typically hear of LossyWAV in the audiophile world because who wants "lossy" after all :-), and the typical audiophile writer would never speak of it since there's also no money to be made on the industrial side even though something like this could be great for size reduction while keeping quality in a streaming context.

Thanks Archimago. One thing less to worry about. Personally I would have liked to have CD Red Book included as well, as I would expect it to be equally indistinguishable.

Yes Willem.

I too would love to include 16/44 or at least 24/48 because 24/48 would be about the size of these MQA files which TIDAL seems happy to be streaming.

Hard to do this with an Internet blind test. Way too easy to get untrustworthy results if samplerates were different, bit depths easily discerned, or upsampling easily detected between samples. I'm sure industry sponsors would have loved to see certain outcomes if the blinding were off :-).

I do have a small problem with this test...

Most of the DACs that are listed are not capable of decoding MQA. As such the listeners cannot have been listening to decoded MQA, but to a file that is the mutilated version of what MQA claims is the original file.

Hi Menno,

A couple of things. Remember we're looking at the MQA decode straight out of the software decoder. The only thing here that would be different is the use of iZotope RX 6 upsampling rather than the DAC upsampling found in MQA DACs.

IMO, iZotope RX 6's 64-bit upsampling algorithm would be more precise than your typical MQA DAC.

I agree with Menno van Oosten's observation. Of all 31 DACs listed, only 3(!) are capable of decoding MQA. Most of the music sources used have the same problem. So this A/B comparison cannot be regarded as a correct and objective comparison of MQA Core decode versus hi-res. MQA will only work in full core decode mode in an end-to-end MQA streamer + MQA DAC situation. So I am sorry to conclude that this post is incomplete and misleading.

An MQA capable DAC is not needed for this test.

See: http://archimago.blogspot.nl/2017/07/internet-blind-test-mqa-core-decoding.html

quoting:

Pre-requisites:

First, this is what you'll need to get the job done right:

A. A good computer / streamer device to play back the hi-res FLAC files. These files are either 24/192 or 24/176.4kHz in order to minimize non-integer sample rate multiples. They are large files with a total download size of ~350MB for the test package.

B. A good high-resolution DAC capable of playing back the above in a bit-perfect fashion. No need for an MQA-capable DAC of course. Make sure that the DAC is not capped at 88.2/96kHz because these files capture the MQA-like digital filter effect with frequencies >44.1/48kHz in order to provide a reasonable facsimile of what an MQA Render DAC provides plus keeping the comparison "apples-to-apples". Also please make sure to turn off extra processing like room-correction, EQ, crossfeed, spatialization, normalization / ReplayGain.

C. A good system capable of high-resolution playback. Good (pre)amps are a must. Likewise, good speakers and/or headphones are essential!

D. Some free time (I suggest around 30 minutes) to run the test & great ears. :-)

One can of course assume that an MQA capable DAC performs better than the emulated effect tucked in a 'standard' high bitrate PCM encoded file.

Hi Solderdude Frans,

I am aware of all pre-requisites as defined, but the assumption that an emulation of the MQA algorithm will produce the same sound as a MQA-core DAC is per definition incorrect.

With true and certified MQA decoding it is all about the ANALOG end result of the sum of all parameters which are applied to the correction of the digital signal and the behaviour of the DAC itself.

Since MQA co-operates with the record companies, who provide fingerprinted information regarding the (past or present) ADC, DD and DAC tools that have been used during the recording and mastering of the music, an end-to-end solution is the only way to properly test the technology. MQA is much more than just some new filtering and upsampling method. To secure provenance, advanced encryption is used as well. Together with the end-users's DAC behaviour, all this is an inevitable chain of information required for deblurring the music signal.

MQA achieves delivering a time smear of about 10µs for existing digital recordings, with the aim of achieving 3µs for newly recorded material.

This time-smear reduction is the essence and so-called 'paradigm-shift' of what MQA is all about! It makes the MQA proprietary algorithm 'work' and it is the reason why it sounds more natural, analog and even better than a new 24/192 recording which contains 100µs of time smear.

It means that an honest A/B comparison test between MQA core decoded and non-MQA music can only be performed with a certified MQA DAC.

All efforts made by Archimago are nice to know and to read, but with all respect, worthless with respect to objective auditive comparisons.

It is impossible to emulate a pear to become an apple and then compare such 'apples' with apples. The emulation process is handicapped and incomplete.

MQA is a totally different approach towards improving our listening pleasure and it seems to be difficult for many to understand and accept that this technology has such capability. The only way to know and understand is to listen to it over a period of time with your own beloved music, as I have done for one year now.

And although MQA offers a lot to me, I still love non-MQA music as well. It is just a nice add-on and sometimes increases my listening pleasure when using Tidal streaming service or downloads.

This article in Sound-On-Sound provides some insight into how MQA works, I hope you appreciate it:

https://www.soundonsound.com/techniques/mqa-time-domain-accuracy-digital-audio-quality

"MQA achieves delivering a time smear of about 10µs for existing digital recordings, with the aim of achieving 3µs for newly recorded material.

This time-smear reduction is the essence and so-called 'paradigm-shift' of what MQA is all about! It makes the MQA proprietary algorithm 'work' and it is the reason why it sounds more natural, analog and even better than a new 24/192 recording which contains 100µs of time smear."

Pedro,

Please cite/link your references to "time smear" audibility thresholds as an isolated factor, via rigorous, controlled double blind listening tests with adequate statistical analysis. These tests must be for "time smear" audibility only, using music and must account for all confounders, like aliasing distortion, etc, etc, as to isolate "time smear" as the cause/effect....as you are very specifically claiming above.

Thanks in advance Pedro.

I seem to have more trust in the proprietary research which MQA has done over the last years than you have. MQA is a different approach to defining listening to HD music and the only way to judge this is to listen to it carefully. I have lots of respect for genuine statements by leading sound engineers like Bob Ludwig and Morten Lindberg. They have access to the real deal and original master tapes. When I asked Morten why the 2L.no download shop defines their MQA downloads as 'original resolution', I received this answer:

Hi Peter! "MQA Original Resolution" is quite literally the sample rate and word-length used at each and every recording preserved thru editing, mix and mastering into the MQA container. For recordings originating from our latest generation of the Horus AD converter the amount of deblur process is minuscule. For older recordings, the deblur is more substantial. Yes, I would say that MQA is an even more fine-tuned sonic experience of our recordings than the straight PCM. What I find difficult to understand is why so many are afraid to trust their own sonic experiences? It's my number one priority in masterclasses; not to tell the students what to hear, but to let their mental and physical guard down, be exposed, and trust what they hear.

Another genuine observation can be seen over here, where Bob Ludwig tells of his experiences with the MQA remastering of his old DAT tape recorded album of Steve Reich - at 1:04:00 it starts. The rest of the presentation might provide some answers as well. I do trust their claim and I have had a positive listening experience with MQA for 1 year now. It is not just different, but better. Listening to Pink Floyd 'Echoes' via Tidal in MQA is a revelation and proved that MQA has unheard regenerative capabilities. https://www.youtube.com/watch?v=QjMjyeJe48U&t=4705s

Ok, thanks for admitting you have absolutely nothing to support your baseless, specious claims of "Time smear" cause/effect audibility Pedro, much appreciated.

You are correct Ammar, I cannot prove the claim as made by MQA as written in the Sound On Sound review and elsewhere. It is also not my responsibility to do that. I just listen and try to comprehend what I hear and why my ears and brain seem to appreciate the result. I am actually collecting articles related to Time Coherence and Temporal Resolution and MQA patents just to learn from them. But, as mentioned earlier, MQA will have their reasons not to tell everything. It would be nice to be able to measure the claims of the Time Smear reductions in air somehow. For me, it's enough to personally witness and decide whether I prefer CD, SACD, HD downloads or MQA, and the more Tidal releases there are, the easier it gets to compare at least the 16/44 FLAC stream against the 24/44, 88, 96, 176, 192 up to 384 MQA unfolds. If it sounds like High Resolution I am happy with it.

Peter, I don't care what you prefer, aliasing distortion or not. Just beware that there are folks in audio who are technically literate as opposed to audiophiles...and see right through the BS, charlatanry and shilling.

Obviously, MQA now have a big problem with this first(?) real listening test.

That's why shills like Peter or Pedro are busy blurring the scene by uttering all kinds of buzzwords. It's the strategy of Fear, Uncertainty and Doubt (https://en.wikipedia.org/wiki/Fear,_uncertainty_and_doubt)

I understand the difference between the filters.. that's in the end what it is all about.

That and the DRM.

Archimago's test is about the differences between these 2 codecs.

A PCM 192kHz can 'capture' an MQA decoded signal.

Your concern that the recorded music did not go through an MQA encoder and thus is time smeared doesn't seem valid when the recorded material is coming from a 192kHz master but would be valid when it came from a 44/48kHz master.

I understand your reservations about the test.

The de-blurring stuff is what ruffles many technical feathers and seems a bit nonsensical to those technically misled people (like me) for recordings other than redbook, which, let's face it, is the vast majority of music recordings out there.

There is one thing that bothers me with the whole 'filter' stuff.

You see... the impulse signal used to show the response of the filter is a signal that absolutely is NOT present in any music signal and can never be recorded with an ADC from any similar analog signal.

It's a bit like the nonsense that DACs need to be able to produce a perfect square-wave, which also does not exist in nature nor in any recording on this planet.

All practical 'fast' impulses in music span at least several samples (also in redbook) and decays of any natural signals are always slower than any filter.

The filter thus isn't the problem.

ONLY digital test signals and accidental glitches in a digital file are that 'fast' and trigger the filter's response simply because the frequency of that is above that of what can be recorded from an analog source which is limited at the ADC side.
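The argument above can be sketched numerically. What follows is only a minimal illustration under stated assumptions: the filter (a 101-tap Blackman-windowed sinc with its cutoff at 0.45 of the sample rate), the 16-sample raised-cosine "transient", and all function names are hypothetical choices made for this example, not the filter of any real ADC, DAC or MQA decoder. It compares how much output appears before the input has even started (pre-ringing) for a single-sample digital impulse versus a pulse that spans several samples.

```python
import math

def lowpass_taps(num_taps=101, cutoff=0.45):
    """Linear-phase windowed-sinc low-pass filter.

    cutoff is in cycles per sample (Nyquist is 0.5). Both defaults are
    illustrative assumptions, not the filter of any real converter.
    """
    mid = (num_taps - 1) // 2
    taps = []
    for n in range(num_taps):
        m = n - mid
        # Ideal low-pass impulse response sampled at offset m from centre.
        if m == 0:
            ideal = 2 * cutoff
        else:
            ideal = math.sin(2 * math.pi * cutoff * m) / (math.pi * m)
        # Blackman window truncates the ideal response smoothly.
        phase = 2 * math.pi * n / (num_taps - 1)
        window = 0.42 - 0.5 * math.cos(phase) + 0.08 * math.cos(2 * phase)
        taps.append(ideal * window)
    gain = sum(taps)
    return [t / gain for t in taps]  # normalise to unity gain at DC

def convolve(x, h):
    """Direct-form convolution of two lists of floats."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y

def pre_ringing(x, h):
    """Largest output magnitude before the (delayed) input has begun.

    Assumes x starts at index 0; the filter delay is half its length.
    """
    delay = (len(h) - 1) // 2
    return max(abs(v) for v in convolve(x, h)[:delay])

taps = lowpass_taps()
impulse = [1.0]  # single-sample digital test impulse
# Raised-cosine pulse spanning 16 samples, standing in for a band-limited transient.
pulse = [0.5 * (1 - math.cos(2 * math.pi * n / 16)) for n in range(17)]

ring_impulse = pre_ringing(impulse, taps)
ring_pulse = pre_ringing(pulse, taps)
print(f"pre-ringing, single-sample impulse: {ring_impulse:.4f}")
print(f"pre-ringing, 16-sample pulse:       {ring_pulse:.6f}")
```

With these assumed parameters the single-sample impulse produces clearly visible pre-ringing while the multi-sample pulse produces far less, because it has very little energy near the filter's cutoff. This says nothing about audibility either way; it only illustrates that the ringing depends on how much signal content sits near the cutoff frequency.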

Sure.. an MQA file is smaller in size and easier to stream.

It is partially lossless but the (to me at least) inaudible harmonics are lossy. Of course we cannot hear this at all... harmonics being captured lossy.

The soundonsound article is a nice sales pitch ... nothing more.

One can choose to believe it or have some reservations or doubts about the claims. As is the same for many audio 'claims'.

In the end it is about music being recorded and reproduced.

Mostly this is determined by the sampling frequency and perhaps filter roll-off.

That's what Archimago tried to emulate. The filter character of the reproductive chain. The ADC side of it is 'bypassed' by the recording side being high-res and thus not so 'time smeared'.

And yes.. it is not exactly the same as an MQA decoded original file but very close to it (AFAIK) where the high sample rate can fairly accurately emulate the filter characteristic used in the MQA decoder.

I know Archimago's attempts have the appearance of a witch-hunt against this new format.

A new format many people seem to like and thus prefer to dismiss his findings.

All is well with that for me though.

I could not care less about any format and for 99% of the time am even fine with well recorded music in 48kHz MP3 320kbps format as well.

Doesn't take anything away from MY personal music enjoyment but realize others may have a different POV/preference.

As long as using another format, DAC setting, filter or whatever floats one's boat I am fine with it.

Memory and bandwidth are cheap.

There is little reason not to use 96/24, nor going above it for me..

I don't think a new encoder/decoder is needed at all nor do I particularly care for the DRM. 10 years ago this MQA thing would have been a real solution.

I see MQA as the Video-2000 and DCC solutions from Philips... nice solutions but too late.

To me MQA is about...well ... money (re-issues of recordings) and DRM (again money).

Not so much about really improved SQ to please the happy few audiophiles.

Of course to others the SQ seems really improved.

To them I say ... enjoy the music even more.

Sorry Solderdude Frans, I stick with my comments as earlier described. The same discussion was going on yesterday on Doug Schneider's Facebook page. I especially appreciated one comment which captured the essence of this crippled attempt to compare PCM vs MQA: Bingo. It's like someone saying "let me try a SACD in my non-SACD CD player. SACD sucks! It doesn't sound any better than CD."

You're wrong: this test is more like "let me try a SACD converted to 88.2kHz 24 bits PCM on my network audio streamer".

Now I only need to find a way to get 24-bit MQA files from Tidal through my SqueezeBox, instead of the truncated 16-bit versions we now get.

Agreeing to disagree works best in these situations.

Pedro:

That quote about SACD might be true *IF* there was an actual analogy there!

SACD is as you know a DSD stream. So if someone were to somehow play a PCM equivalent through a CD, then of course that doesn't make sense.

But MQA is just PCM with a proprietary compression technique and various upsampling filters to choose from. This is worlds apart from comparing SACD and CD or if you prefer DSD and PCM. You seem to be swallowing the Kool-Aid that MQA is somehow all that different!

Everything about MQA hinges on a belief that the "temporal smearing" is somehow improved and hence "sounds better". This test shows that there is no appreciable "de-smearing" within the MQA Core encoding/decoding that results in a preference for MQA. We are therefore left with the presumption that it is the choice of the 16 filters as demonstrated in my AudioQuest Black "Full Monty" post that is the heart of this "de-blurring".

Yes, those filters can also be tested which I will leave to individual audiophiles to try. Basically they're just slight variants of weak antialiasing settings sent to the DAC (in the case of the Dragonfly Black, an ES9010 chip... Woopie...).

Let's be honest. The reason there are no good technical papers to show audibility is because all of this is based on a theoretical concept. They're selling faith - a belief that this is "revolutionary". IMO the revolution is a mirage.

Peter, you are completely wrong. If there is something "crippled" in this case, it's the way that MQA tries to persuade.

“MQA file ... is partially lossless”

Just like a Big Mac is partially vegetarian?

Hi boballen. The interpretation of the lossless part of MQA is often incomplete. The audible spectrum up to 24 kHz is 100% lossless, but the frequencies above that, which cannot really be heard but do contribute to our perception of timing information, are indeed lossy. This is required in order to keep the bandwidth of the MQA stream in the same order as a CD. The most interesting part to me is that the algorithm is capable of repairing the large degree of time-smear which was introduced in past and even still-present digital recordings. This means that an MQA file is intrinsically different from the original PCM recording, but can still be played on non-MQA DACs since it is transported in a FLAC or WAV container. Best is to try and test for yourself with headphones and a cheap MQA DAC.

Correct... The important part (the audible range) is lossless, the ultrasonic range is lossy.

This is how MQA makes the files smaller yet sound as good as a lossless 96/24 file.

Also MQA will remove the smallest few bits in a 24 bit original file to accommodate the lossy ultrasonic sounds which are sort of disguised as noise.

Some people claim this helps with pinpointing sound but there is no objective evidence for this statement.

Those 'ultrasonics disguised as noise' will be removed when MQA decoded and the ultrasonics are decoded and added to the lossless part.

When an MQA file is played back on a 16 bit DAC the 'noise' is truncated anyway so will sound the same as redbook.

Well.. at least when the DAC used has an apodizing filter.

When an MQA file is played back on a normal 24 bit DAC the smallest few bits are noise but most DACs will not be able to accurately reproduce 24 bit resolution anyway.
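For a sense of scale on the bit-depth remarks above, the textbook dynamic range of an ideal N-bit quantiser (full-scale sine versus quantisation noise) is roughly 6.02·N + 1.76 dB. The sketch below simply evaluates that formula. How many low-order bits MQA actually gives over to its encoded data is not stated in this thread, so `bits_reused` is a purely hypothetical parameter, and real DACs fall short of these ideal figures.

```python
import math

def ideal_dynamic_range_db(bits):
    """Theoretical SNR of an ideal N-bit quantiser for a full-scale sine wave."""
    return 20 * math.log10(2 ** bits) + 10 * math.log10(1.5)

# Hypothetical numbers of low-order bits given over to other data.
for bits_reused in (0, 2, 4, 8):
    remaining = 24 - bits_reused
    print(f"{remaining}-bit audio: {ideal_dynamic_range_db(remaining):.1f} dB")

# Redbook word length, for comparison.
print(f"16-bit audio: {ideal_dynamic_range_db(16):.1f} dB")
```

Each bit is worth about 6 dB, so even with several low-order bits given up, the remaining word length still has a theoretical range at or above that of 16-bit audio.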

There is no "time-smear" to begin with.

The "problem" MQA claims to repair simply does not exist.

Whenever someone comes up with a "problem" like "time-smear", "timing", "jitter" or "stair-steps", it's almost always certain they are trying to sell snake oil.

Pedro:

Yes, MQA is "different" from 24-bit PCM in many ways as HDCD was "different" from 16-bit PCM. They both added a decodable component to the least significant bits and were therefore "compatible" with standard playback. All this hype about "breaking" Shannon-Nyquist is simply that there is a decoder that extracts the lossy compressed part into the 2fs frequency range. My goodness... Talk about overselling.

One thing I must correct though:

"This is required in order to keep the bandwidth of the MQA stream in the same order of a CD."

MQA is actually the bandwidth of 24/48. I'd love to see a blind test comparing decoded MQA with hi-res 24/48 from a true hi-res source with high dynamic range!
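The arithmetic behind that correction is easy to check. The sketch below computes raw (uncompressed) stereo PCM bit rates only; the function name is made up for this example, and actual streamed FLAC bit rates are lower and vary with the music.

```python
def pcm_bitrate(sample_rate_hz, bits_per_sample, channels=2):
    """Raw PCM bit rate in bits per second, before any lossless packing."""
    return sample_rate_hz * bits_per_sample * channels

cd = pcm_bitrate(44_100, 16)        # redbook CD
container = pcm_bitrate(48_000, 24)  # a 24/48 container

print(f"16/44.1: {cd / 1000:.1f} kbit/s")
print(f"24/48:   {container / 1000:.1f} kbit/s")
print(f"ratio:   {container / cd:.2f}x")
```

On raw numbers a 24/48 stream carries roughly 1.6 times the data of a CD stream, which is the point of the correction.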

You know Pedro, you can run the blind test yourself if you want and share your results. You can even do as Archimago did and ask people on forums to participate, and again share your results.

I would love to attend such a comparison :-)

Very good work!

They hate those blind listening tests on the Steve Hoffman forums, because they do not trust their ears.

Hi Tim. LOL.

Perhaps they can't trust their MIND either and need "golden ear" types working *for* the industry to tell them what to think despite the obvious reality and thread-bare nature of the hype!

BTW. I try to be fair to the SH Forum since I do enjoy much of the discussion. There are many very knowledgeable and reasonable folks there as well...

Archimago, you are right, there are some on that forum with knowledge and reason. But forum rules are against knowledge. Instead, subjective anecdotal listening "experiences" are the official forum rule. Anything objective on Steve Hoffman is against forum rules.

My guess is that the guy himself cannot trust his ears anymore either, so his mind has to trust the industry snake oil. "So sad."

"Pedro," we know who you are.

Others, "Pedro" is a shill for MQA. He posts on Computer Audiophile as PeterV and runs a Facebook group for MQA promoters. Please ignore him.

Thanks Måns, makes sense ...

well... the warning does.

MQA ... not so much... nor Pedro.

Was it that obvious? ;-)

Yes. ;-)

MQA is nothing but a bad joke. Just like HDCD. They try to fix problems that are nonexistent. And they try to fix them in one of the worst possible ways.

Nobody needs MQA, just like nobody needed HDCD. Is there any new product with HDCD out there?

I have nothing to hide, Mans Rullgard. My name is Peter Veth and I do (did) post sometimes on computeraudiophile and I have started a closed Facebook page for myself, just to collect background articles and stuff related to MQA. Since there is still much scepticism, I keep this closed. Some friends joined. Calling me a 'shill' is based on prejudice and is a childish way to force people who have a different, positive opinion about MQA to shut up. So if anybody should be ignored it is you!

Obviously MQA causes "Name smearing" distortion, where Peter becomes "Pedro".

But of course, not for shilling purposes, but rather, "authenticity"

....;)

;-)

Peter Veth denies being paid by MQA, with plausible deniability:

https://www.computeraudiophile.com/forums/topic/30381-mqa-is-vaporware/?do=findComment&comment=730140

He rejects any technical argument such as the spectral plot of MQA vs DXD where MQA seriously alters the original.

Also confuses analog with digital, in some comments MQA is an analog algorithm, in other it's digital and needs a lot of DSP power.

Does this guy have any clue what he's talking about? He also claims minimum phase filters are faulty, not realizing these filters are used by DAC manufacturers and MQA. Without minimum phase upsampling, there's no MQA.

His canned responses are:

- All hypothesis why DSD or WAV or MQA is better on paper is per definition providing an incomplete picture

- our ears are not digital, but analog, go listen

=> shut up and go buy that MQA dac or product

- I trust (paid) engineers such as Bob Ludwig ...

- time domain argument with canned resources (paid articles)

Do we really believe Peter's latest comment on CA?

"Bob Stuart is a crook..! To be honest: the fact that he personally was fiercely attacked and his replies have always been polite, that motivates me strongly to step up against these dishonest persons, of which most of them have hidden agenda's and are afraid of losing some business. That type of 'format war'and dishonest fighting using dishonest arguments, that is what you should be worried for Chris! "

Why would any normal person with a day job become an unofficial defender and spokesperson for MQA? Suppose it's not the case, then Peter Veth has a serious mental issue. Which employer would allow an employee to spend so much time defending a product from which it gains nothing? Officially Peter Veth works / worked for a1-envirosciences gmbh.

It does not make sense unless PeterV/Pedro/Peter Veth is being paid.

When PV can no longer find any arguments to win the discussion, he starts to attack his opponents. We are now in this phase at CA where he attacks other vendors, as he lacks the technical skills to debunk the technical faults presented in MQA. It's also being disclosed PV actually may be paid by MQA, by an MQA partner who spilled the beans.

Any technical argument is responded with "you have no clue". I would suggest that Peter buys a big mirror and starts looking in it.

If Peter Veth was my employee he would already have been fired for wasting the company's time and resources into endorsing non related products.

Hi Frederic vanden Poel or someone who is very close to you ! :-)

I recognise your language and strange arguments from everywhere. You can accuse me as much as you want, but you are making a fool of yourself. Make as many accounts here and there and everywhere and threaten to call my office a1-envirosciences to say that every time I take a break, I am not doing my official job..haha... Well, you are right, I will proceed with my work, coffee was nice, the weather as well, but before I do that, I will post my official and complete reply on Computeraudiophile to Chris Connocker after he sent me a message asking me officially if I am being paid by MQA.. I wonder who sent him a complaint..?? hahaha You are a real jerk, just tell me who you are so I can check if you are being paid correctly by your boss, or maybe you are being paid by someone just to reply to my message on CA and place it here. Well, I will show Chris Connocker this story completely. pfffff..!

Chris post first:

Hi @PeterV - I'm not a conspiracy theorist, but I have to ask, are you being paid or do you have an affiliation with MQA? Someone brought it to my attention that, "it was disclosed by MQA partners that Peter Veth is getting paid to do this." Please let me know what's up. If you are affiliated with MQA, you need to state that in your post signature line. If you're not, no worries. Either way you can continue to post, as long as everyone knows an affiliation you may have. If you say you don't have an affiliation with MQA and it later comes out that you do have an affiliation with the company, then we'll have issues. Thanks in advance for addressing this issue promptly.

Here’s my answer to Computeraudiophile’s Site Founder Chris Connaker, just half an hour ago, which you have been monitoring eagerly, just to misuse it over here by copying a part of it... boy, oh boy, I pity you!

Hi Chris, Rest assured that I am in NO WAY affiliated with or paid by MQA at all. These accusations have been made against me before in past posts on CA and also recently in this 'vaporware' thread. The last time, I replied cynically that I am being 'paid' or better said 'rewarded' as all Tidal subscribers with MQA functionality at home are: by an increased sound quality at the same cost as a normal Tidal Hifi subscription. It is the same strategy as some persons are trying with journalists, sound engineers and other pro-MQA advocates: all those who like MQA or write positively about it are being bribed and paid for..! So childish and foolish. And the most disgusting reply was by someone last year who wrote that even Mr. Bob Stuart is a crook..! To be honest: the fact that he personally was fiercely attacked and his replies have always been polite, that motivates me strongly to step up against these dishonest persons, of which most of them have hidden agendas and are afraid of losing some business. That type of 'format war' and dishonest fighting using dishonest arguments, that is what you should be worried about, Chris.

Quote - Steven Stone (writer TAS) on his Facebook page: Yes, MQA IS a paradigm shift - I record in 5.6 DSD. I had several of my own recordings transferred into MQA so I could compare them. At worst I could hear no audible differences and on a couple of tracks I preferred the MQA version. My recordings are live classical symphony concerts as well as acoustic groups such as the Matt Flinner Trio, Mr. Sun, Choro Das 3, and field recordings. http://www.theabsolutesound.com/articles/let-the-revolution-begin/ So is he part of the large 'shill' conspiracy together with Robert Harley, John Atkinson, Morten Lindberg and Bob Ludwig, Mr Mans Rullgard? These persons are esteemed journalists and sound engineers and they judge by LISTENING to the real thing. I suggest you should do the same instead of making awkward accusations...!

Hello Pedro,

That's nice for Mr. Stone. Just because a person is esteemed for what they do within certain circles does not mean they're also free from bias or self-interest.

I have no issue against MQA in how it sounds because I have heard it too at this point with many "studio masters" at home, at the local dealer, and at an audio show. As the blind test results show, it's *equivalent* to Hi-Res PCM (remember, as DSD goes up like DSD128/5.6, it actually performs more like Hi-Res PCM with lower noise in the ultrasonics) - how much better does it get? None in my books!

BTW, there's no need for "awkward accusations" if we stick to the facts. Name dropping a few people and what they believe or think they hear (and others think at best sounding subtle)... Or holding a technique in high esteem (even "revolutionary!") because Bob Stuart or Peter Craven were involved without digging deeper into simply what it *IS* unfortunately keeps arguments superficial.

Scratch below the surface and see if there is any depth to these claims. If there is, then present the arguments and facts; I'm not particularly interested in who claims what or "he said she said". Nor do I believe that this kind of argument would appeal to many knowledgeable audiophiles these days. I do hope the audiophile press recognizes this.

Hi Archimago, the question of how MQA works or does not work cannot be answered only by emulating it and looking for answers in the digital domain. The claim MQA makes is that the algorithm is capable to deblur the time-smear by a factor of 100. I do not believe that thi statement is false. You might know what deblurring algorithms do with high-speed HD video, for example, and what can be learned about applying the same technology to music signals (or not). Although your attempt and measurements are of interest, you will have to admit that the audience who listened to your files have not been listening to core encoded MQA files, since over 80% of the participants did not listen with an MQA certified DAC. What has been happening for more than 2 years now is a lot of rumours about how it cannot work and is not good at all, alongside a growing group of listeners who do have the hardware to listen carefully. MQA = increasing Time Coherency = redefining what HD music means to our ears and brains. Music is after all an analog phenomenon. Our brains are influenced by many parameters. Q-Sound, for example, on Roger Waters' album Amused to Death, is a perfect example of how psychoacoustics works in another form. MQA is capable of improving the Time Resolution in a proprietary way and it seems to be a psychoacoustic effect as well. It has nothing to do with 13-, 16- or 24-bit, etc. It is a paradigm shift, which it would be nice to be able to measure in AIR, so in analogue form. I would applaud if you would proceed and set up a test with high-end microphones and software etc. and really measure if the Time-Smear of a CD in air is indeed 300 milliseconds and 24/192 files still much higher than MQA. That would be a real breakthrough!

The post of Pedro/Peter, or whatever the name of that MQA shill is, is just more B/S namedropping and the strategy of Fear, Uncertainty and Doubt.

Fact is: Nobody has ever heard any improvements MQA made over standard PCM. That's for sure.

I see, Pedro...

So it comes down to this:

"The claim MQA makes is that the algorithm is capable to deblur the time-smear by a factor of 100. I do not believe that thi (sic) statement is false."

You believe and have faith that there is a time-smear reduction by a "factor of 100" as per the company. You are willing to (verbally, virtually) fight for this but not bring anything new to the table except your faith and a few spurious attempts at analogy. MQA is not "high-speed HD video deblurring" (do you mean some of the interpolation algorithms or sharpening algorithms?), nor is it Q-Sound which as you know doesn't need to be hi-res ("Amused To Death" CD sounds great already).

Remember, MQA Core decoding is what the majority of subscribers are listening to with a desktop TIDAL app already; MQA claims this sounds better does it not? Whether you like the blind test's upsampling algorithm or not, already the results have shown that there is no MQA preference. Remember, MQA even dares to claim that the *undecoded* MQA file sounds "better" than the standard CD track - to the point where they're even reaching down to the bottom of the barrel in bitrate to promote MQA-CDs:

https://www.stereophile.com/content/mqa-encoded-cds-yes

As you can see, they claim:

"In this case, the sound quality is slightly better than a typical CD, because the audio is already de-blurred in the studio."

Obviously with a 16/44 signal off a CD there's just not enough data to reconstruct much if any of the ultrasonic spectrum so all it ends up being is to "unfold" using their weak upsampling filters as per the Dragonfly Black impulse responses thanks to Mans' research work.

So then, the "paradigm" is basically to apply one of 16 custom, weak upsampling filters that allow aliasing artifacts to pass through and we'll achieve deblur by a "factor of 100"? Does this sound revolutionary? Or does your faith require that there's significantly more to this process which MQA has not revealed thus far?

"...deblur the time-smear by a factor of 100"

Could you please define what that phrase means?

Please assume that I am familiar with the Shannon-Nyquist Theorem (and any other aspects of digital signal processing needed to understand your explanation), but have no clue what the buzz-phrases and neologisms used by MQA enthusiasts (and their audiophile acolytes) mean.

That Pedro/Peter MQA shill dutifully repeats all the B/S phrases and buzzwords from the MQA advertising material.

That shill tries to blur the scene with phrases like "not MQA certified", or whatever buzzwords come from the copywriters.

But...

There is nothing like a "Time Smear", to begin with.

MQA claims to fix that non-existent problem of "Time Smear".

But, simply, it's just not there.

Simple as that: No problem, no fix, no need for MQA.

Tim, why do you respond with such hostility to someone who has a different opinion from yours? Why do you accuse me of being a shill? Is it fear that your beliefs and prejudiced opinions are endangered? Come on! Start doing some research like I do, then you will discover what the definition of Time-Smear is and that this for sure is not a buzzword!

"When we use impulse responses to analyse the time-domain behaviour of a linear-phase filter, what we find is a response with significant pre- and post-ringing (see Figure 1). The filters employed in a 44.1kHz or 48kHz system typically have ringing tails extending for several hundred microseconds before and after the main impulse. These ‘ringing tails’ act to spread out the signal’s energy over time - often referred to as ‘temporal blurring’ - and it is thought that our sense of hearing may be sensitive to this side-effect. It is also the case that pre-ringing, where sound energy builds up in advance of the sound’s actual starting transient, cannot possibly occur in nature. This could, therefore, be a particularly unnatural and undesirable artifact of existing digital technology."

Read this article and then you will understand a bit better. There is much more out there which will explain what pre- and post-ringing means and how it contaminated (and still contaminates) digital recordings.

https://www.soundonsound.com/techniques/mqa-time-domain-accuracy-digital-audio-quality

You quote:

"The filters employed in a 44.1kHz or 48kHz system typically have ringing tails extending for several hundred microseconds before and after the main impulse. These ‘ringing tails’ act to spread out the signal’s energy over time - often referred to as ‘temporal blurring’ - and it is thought that our sense of hearing may be sensitive to this side-effect. It is also the case that pre-ringing, where sound energy builds up in advance of the sound’s actual starting transient, cannot possibly occur in nature. This could, therefore, be a particularly unnatural and undesirable artifact of existing digital technology."

You need to read this carefully and with the understanding that this 'ringing' only occurs with signals around and over nyquist.

This means that only some harmonics that are close to the inaudible part of the spectrum can (and will) trigger pre- and or post-ringing at the frequency of that filter.

You should realize that in music signals the harmonics that actually reach those frequencies are very small in amplitude and because these are real world signals also have a certain decay by themselves which will partly mask that ringing as well.

About the pre-ringing. That will occur at the filter frequency which by itself is already at an inaudible frequency.

Especially for the elders (40+) amongst us.

Also when you read the text carefully a few lines stand out.

At least to me.

"... it is thought that our sense of hearing..."

It doesn't say that it DOES... it is believed, like you can believe many things.

"...,This could, therefore, be ..."

The same applies here ... the writer says it COULD he does not write it DOES.

Smart writer ... he isn't lying, just assuming/guessing.

It is easier to fool your brain than not fool it.

And it is even harder to prove it, from both sides of the debate.

"MQA claims fixing that non-existant problem of "Time Smear".

But, simply, it's just not there."

I am willing to discuss whether "it" exists and, if so, whether "it" is a problem. But first I need to know what "it" is.

So, Pedro, before gibbering on any further, please define your terms.

Read my reply to Tim Muller, "He who sees clowns and shills everywhere".

Yes. I read your reply (the one above, and several others downthread).

The notion of "time-smear," as you've defined it, is nonsense. Or, more accurately, the result of a basic misunderstanding.

It's absolutely true that a PCM encoding with (say) a sample rate of 96 kHz cannot accurately reproduce an analogue audio signal which has significant spectral intensity above the Nyquist frequency (48 kHz, in the case at hand). The reconstructed audio wave-form is not "smeared"; it is bandwidth-limited.

On the other hand, that sort of audio signal (like the impulse response you cited, or a square-wave, or ...) is an artificiality. If you take an actual audio signal produced by a good recording microphone, there is no spectral intensity above 48 kHz. With rare (and expensive) exceptions (like this one) their sensitivity drops precipitously above 20 kHz. Moreover, the source material that most of us prefer to listen to (music, as opposed to breaking glass, or grinding metal) has almost no spectral intensity above 20 kHz, let alone 48 kHz, for the microphone to capture.

(This is not to say that if you do a spectral analysis of a 24/172 or higher sample-rate PCM encoding, that you won't find low-level "content" above 48 kHz. Just that that content is electronic noise, not part of the original audio being recorded.)

Given a bandwidth-limited input signal (i.e., an actual recording of actual music), conversion to/from PCM does not introduce any "time-smearing".

I would advise you to have a discussion with experienced sound engineers like Morten Lindberg who know what time-smear is and what damage it causes to past (DAT PCM) and even present PCM recordings. It's all about cumulated pre- and post-ringing effects. That's why the first CDs sounded so harsh and why many experienced listeners never liked CD and stuck with vinyl. MQA is now explaining why digital recording used to be so bad. But if it's a buzzword for you and you prefer the pre- and post-ringing definition, that's ok.

Peter, you are continuing to espouse your MQA "Time smear" nonsense even after being exposed as "Pedro" the shill? After admitting there was no "time smear" evidence except in your imagination and the MQA propaganda you parrot?

That is, by definition, shilling.

"That's why the first CDs sounded so harsh..."

No, that's not why.

"MQA is now explaining why digital recording used to be so bad."

Nonsense.

"But if it's a buzzword for you and you prefer the pre- and post-ringing definition, that's ok."

Pre- and post-ringing is not an actual problem for actual recorded music (which is naturally bandwidth-limited). It is a made-up problem, for impulse response, square-waves, and other artificial signals, which have significant spectral intensity above the Nyquist frequency.

But thank you for clarifying that that's what you meant. (Solderdude Frans had proffered a different definition (perhaps he was being unnecessarily charitable), involving the phase shift induced by shallow analogue low-pass filters which roll off well below 20 kHz.)

"That's why the first CDs sounded so harsh and why many experienced listeners never liked CD and stuck to vinyl."

What rubbish. Sure, some early CDs sound harsh, but many sound excellent. For example, the 1983 Sony-mastered Dark Side of the Moon is still regarded as one of the best-sounding masterings of that album.

As for many preferring to stick to vinyl, again rubbish. I know many audiophiles from many walks of life; I deal with them as part of my job. I assure you that those who stick with just vinyl are a very small minority (albeit a vocal and evangelical one); most are more interested in listening to the best version (i.e. mastering) of an album, be it vinyl or CD. Most would also agree that, all production matters being equal, a CD will sound better than vinyl, which of course is what one would expect given that there is not one measure of fidelity where vinyl matches, let alone exceeds, that of CD. One interesting observation that may be relevant, though, is that most of the vinyl-only audiophiles I've come across tend to be over 60 (with hearing acuity to match the age profile) and grew up with the coloured vinyl sound.

So what your statement tells me is that you are either a placebophile or associated with MQA or its distribution. I mean, apart from these nonsensical statements you make about vinyl, your description of time smear and your concern about inaudible pre- and post-ringing, while being unconcerned with the very audible wow and flutter and phase shifts of analog playback (even suggesting it is superior), is laughable.

Time-smear....

Such a nice phrase..

It exists though and is caused by the brickwall filtering at the ADC side and the DAC side.

Analog and some digital filters can 'time-smear'.

The steeper the filter the more likely time-smear is.

Time-smear is another word for phase response in that specific channel.

Time-smear will be the same for L and R so won't affect the stereo image.

Time-smear is also present in speakers, headphones, amps and even rooms due to phase shifts at higher frequencies (and sometime in lower frequencies as well).

Take a 44.1kHz sampling frequency, brickwalled at around 20kHz.

As a brickwall filter needs to remove everything above Nyquist (and thus have a lot of attenuation at Nyquist), the filter 'starts' lower, as it has a slope.

You have 2 frequencies that are harmonically related, for instance 1.125kHz and 18kHz.

They both cross the 'null' value at the exact same time in the original signal.

When run through a steep filter the phase shifts for the higher frequencies.

So once captured digitally the phase (timing) may differ from the lower frequency.

The same happens at the DA side (when a similar filter is used) again making it worse.

The reproduced signal thus does not have the exact same phase relation for the highest frequencies.

The times at which the signals arrive are thus changed.

For higher frequencies and slightly lower frequencies (for the upper harmonic) the time delay will be different and thus 'smeared'.

Time-smear...

Can be measured quite easily and is verifiable.

Depends on the type of filter(s) used.

Same freq... 1.125kHz, 18kHz but the sampling freq is now increased to 176kHz and the brickwall is now set to 70kHz.

The 18kHz will not be 'shifted' in time because the filter operates at a higher frequency.. or at worst will have far less phase shifts and thus no time-smear in the audible range BUT still have time-smear at say 60kHz.
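The two scenarios above can be put into rough numbers. The sketch below is only an illustration under stated assumptions: it models the filter as an 8th-order analogue Butterworth low-pass (the order and the filter family are my choice, not something taken from any actual ADC or DAC), and it treats 'time-smear' as the difference in group delay between the 18kHz and 1.125kHz components. Many real converters use linear-phase digital filters, which have constant group delay and trade this effect for pre- and post-ringing, so the numbers mean nothing for those.

```python
import math

def butterworth_group_delay(freq_hz, cutoff_hz, order):
    """Group delay (seconds) of an analogue Butterworth low-pass at freq_hz.

    Each pole p = -a + jb contributes a / (a^2 + (w - b)^2) to the delay.
    """
    w = 2 * math.pi * freq_hz
    wc = 2 * math.pi * cutoff_hz
    delay = 0.0
    for k in range(order):
        theta = math.pi * (2 * k + order + 1) / (2 * order)
        a = -wc * math.cos(theta)  # distance of the pole from the imaginary axis
        b = wc * math.sin(theta)   # imaginary part of the pole
        delay += a / (a * a + (w - b) ** 2)
    return delay

ORDER = 8                 # hypothetical filter order
LOW, HIGH = 1125, 18000   # the two harmonically related tones from the text

def smear(cutoff_hz):
    """Extra delay of the 18kHz tone relative to the 1.125kHz tone."""
    return (butterworth_group_delay(HIGH, cutoff_hz, ORDER)
            - butterworth_group_delay(LOW, cutoff_hz, ORDER))

smear_20k = smear(20000)  # filter corner near 20kHz (44.1kHz-style system)
smear_70k = smear(70000)  # filter corner near 70kHz (176kHz-style system)

print("relative delay, 20kHz corner: %.1f microseconds" % (smear_20k * 1e6))
print("relative delay, 70kHz corner: %.1f microseconds" % (smear_70k * 1e6))
```

With the corner moved up to 70kHz, the 18kHz tone sits far from the steep part of the filter, so its delay relative to the low tone shrinks considerably. Whether a difference of that size is audible with music is a separate question that this arithmetic cannot answer.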

When you are convinced you can hear this you will hear it.

Maybe some people might with test signals and proper gear. The latter may be lacking in tests done by 'researchers'.

All fine and well with a test signal, but the problem is music, which is complicated, and because of this an audible effect of the phase relation above the audible range has NEVER been proven to exist while using real music. All attempts here failed (to my knowledge).

Don't think (poorly conducted ?) phase tests with a single test signal are 'relevant' to how we perceive music.

Have to cut this reply in half...

MQA also uses different filters, less steep, and as Archimago already addressed these will leak aliases.

Now MQA (during the encoding) uses less steep filters and a higher sample rate.

Thus less time-smear during the recording of the ultrasonics.

The recorded signal thus has the same phase relation (time smear) as a similar sample rate PCM format when a similar (apodizing or close to it) filter is used.

No gain there in time-smear unless the PCM uses a steeper (against aliasing) filter in which case you can MEASURE more time smear.

Of course a subjectively found difference may or may not have any real relation to this phenomenon at all. BUT it is very easy to view this as 'the culprit' and thus proclaim that THIS IS the reason.

The reason why MQA claims even non MQA redbook sounds 'better' is because only the reconstruction filter will create the 'time-smear' as the time-smear on the recording side is not present and thus less overall 'smear'.

It thus is a FILTER issue and nothing more. That filter issue is tested with Archimago's test.

But obviously ONLY at the DAC side and only when the filters used in the DAC would have been apodizing or something close to it.

Of course MQA also claims to do extra trickery at the input stage which lowers time-smear.

The way I would do this is by analyzing the phase-shifts at higher frequencies from known gear and simply 'undo' the phase 'damage' in a digital algorithm thereby getting closer to what was originally recorded with steep brickwalls before.

When they did that the 'claim' of reduced time-smear (phase) at the input side is true as the original phase relation is restored.

When using a high sample rate at the DA side using an apodizing filter again then the reduction could be substantial.

The question, however, is how audible this all is ?

Measurable with test signals... sure ... but audible with music ?

Is the brain really capable of analyzing all that input ?

Golden-eared people will say: yes... blind testers may get different results.

Some claim to hear differences even when there aren't any at all.

I have 'tested' people quite a few times and results are often positive.

When people are re-assured quality HAS improved (when nothing changed in reality) they will hear an improvement.

Consider this: Your MQA indicator lights up assuring better quality...

Or to 'believers' the MQA signal = no time-smear and thus technically better = audible better.

Adapt your results to your audio religion I would say.

In the end MQA is launched for a reason... money...DRM.. reselling the same albums all over again.

Technically I have to admit a nice way of going about it.

It is essentially a smaller 'container' with DRM that, in the process, supposedly addresses an issue (time-smear) on paper and in the lab that isn't really problematic in real-world audio (my assumption).

You compare ultrasonic sound with sonic sound, in the audible range.

You forgot the ears!

Ears cannot hear ultrasonics, so they automatically remove every ultrasonic sound, just like a filter would do. The ear automatically adds all the "time-smear" MQA pretends to remove.

Once ultrasonic sound is removed from the audio (during AD conversion), it is no longer there. Running that audio, with the ultrasonics removed, through the same filter again and again will do nothing more to the signal!

Watch this: Monty's Digital Show and Tell
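A quick way to check that 'filtering twice changes nothing' idea is sketched below. It assumes an idealised low-pass that simply zeroes DFT bins above a cutoff; `ideal_lowpass` and the numbers are made up for the illustration. Real filters have a finite transition band and a slightly non-flat passband, so they are only approximately idempotent, but the principle is the same.

```python
import cmath
import math

def ideal_lowpass(x, cutoff_bin):
    """Zero all DFT bins above cutoff_bin (and their mirrors), then invert."""
    n = len(x)
    spectrum = [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
                for k in range(n)]
    kept = [spectrum[k] if min(k, n - k) <= cutoff_bin else 0 for k in range(n)]
    return [sum(kept[k] * cmath.exp(2j * math.pi * k * t / n)
                for k in range(n)).real / n
            for t in range(n)]

# Wideband input: a single click, which contains every frequency equally.
click = [0.0] * 48
click[10] = 1.0

once = ideal_lowpass(click, 8)   # first pass removes the high-frequency content
twice = ideal_lowpass(once, 8)   # second pass has nothing left to remove

first_pass_change = max(abs(a - b) for a, b in zip(click, once))
second_pass_change = max(abs(a - b) for a, b in zip(once, twice))

print("change made by first pass:", first_pass_change)
print("change made by second pass:", second_pass_change)
```

The first pass alters the click substantially; the second pass alters it only at the level of rounding error.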

https://www.youtube.com/watch?v=cIQ9IXSUzuM

Thanks very much Solderdude Frans, this is very well summarised. In 2002, before MQA was developed, there was this opinion regarding time-smear:

"The main reason is that the digital audio data residing on a CD is already irreparably time smeared. No amount of postprocessing of the digital audio data by the playback system can possibly remove or reduce this time smearing."

- Theory of Upsampled Digital Audio by Doug Rife-

http://www.mlssa.com/pdf/Upsampling-theory-rev-2.pdf

Another pioneer regarding the importance of time-coherence in audio is Dr. Hans van Maanen. He is convinced that SACD is much better than CD and that the problems of the CD are all related to the filters, as you describe:

"This leaves us with the knowledge that the steep anti-aliasing filtering results in a time-smear of the input signal which is worse than with MM pick-up cartridges (which has been proven to be clearly audible) and that the interaction of amplitude and time quantisation leads to irregular modulated noise contributions, which are likely to give rise to loss of detail"

https://temporalcoherence.nl/cms/images/docs/HighReso.pdf

MQA is probably following the path you describe and is compensating for past and present filter errors using DSP in such a way that the reconstructed analog output indeed has a (perceived?) reduced time-smear. Whether this is done with an apodizing filter I cannot tell; MQA themselves deny that they apply apodisation, but I cannot recall when or where I read this, sorry.

So, if this technology is indeed repairing the 'irreparable damage' and the original phase relation is restored, then it will be audible as well.

What is needed for true testing, measuring, evaluation and emulation are MQA-encoded test tones, I suppose. But since they have applied for a patent on their technology, these will probably not be released.

Anyway, there is a difference in approach between 'believers' like me, who meanwhile have long-term listening experience with A/B comparisons of MQA and other formats in my home situation, and others who have not. But I am also curious about how it is achieved, not how it cannot be achieved. It is a different approach, in which I search for publications, articles etc. and find my confirmation that experienced sound engineers also like MQA.

The analogy I made with the Q-sound technology is a way to explain that our ears and brain are very sensitive to phase (and time) shifts, and DSP technology is nowadays much more advanced than in 2002. I cannot believe MQA is a scam, that all the research is BS and that all claims by MQA regarding its behaviour in the time domain are literally false. I also cannot believe that the 'golden-ears' are naive and are either part of this scam or convinced believers who do not understand what they (think they) hear. It's like the discussion that ethernet cable quality has no influence either, since it is unmeasurable and only blind A/B testing can confirm that something is influencing the sound quality with such cables.

A long time ago I had some fun with the green paint on the side of a CD. This is also BS for many. Well, I did my personal blind test with friends at the time and it was clearly audible for some and not for others. Interesting.. :-)

Indeed the ears have a steep low-pass filter.

That 'ear' filter does not have phase shifts though.

The transducers, however, are less perfect and may well produce audible 'nasties' caused by ultrasonics.

So for me ... 48kHz is enough... 20 bits too.

All ultrasonics are indeed lost when the 'master' is an original CD and will not change the sound.

All that can be done is that the (possible) phase shifts on that CD are '(partly ?) undone'.

I really don't think this is audible though.

There can be advantages in filtering for formats over 48kHz.

Roll-off in the audible range is less likely.

Things may be (technically) different when the original recording was made on DSD or say 96kHz or 192kHz and that master is used to create an MQA file.

That's what I believe is Pedro/Peter's point.

The audibility of that is at least questionable.

In some cases certain measurements may show no appreciable differences yet there could clearly be some audible difference (even in blind tests) when the audible part (for instance phase response) is not included in the set of measurements.

The other way around can also be true. One can have quite measurable differences yet experience no difference in perceived sound quality.

There can also be measurable and audible differences.

Then there are unmeasurable and inaudible differences (in blind circumstances) yet substantial differences in 'sighted' tests.